The Reactive Environment for Network Music Performance allows you to perform and interact with two remote performers, while taking advantage of our five special features:

Dynamic Volume, Dynamic Reverb, Musician Spatialization, Mix Control, Track Panning

You can now download the code (along with extensive installation instructions and a user manual) from my GitHub repository at:

http://github.com/delshimy/REMC

Disclaimer: While I have done my best to test the code extensively, I may have missed a thing or two. As a result, your feedback is invaluable! Feel free to contact me with any questions, comments or suggestions you may have.

System Features

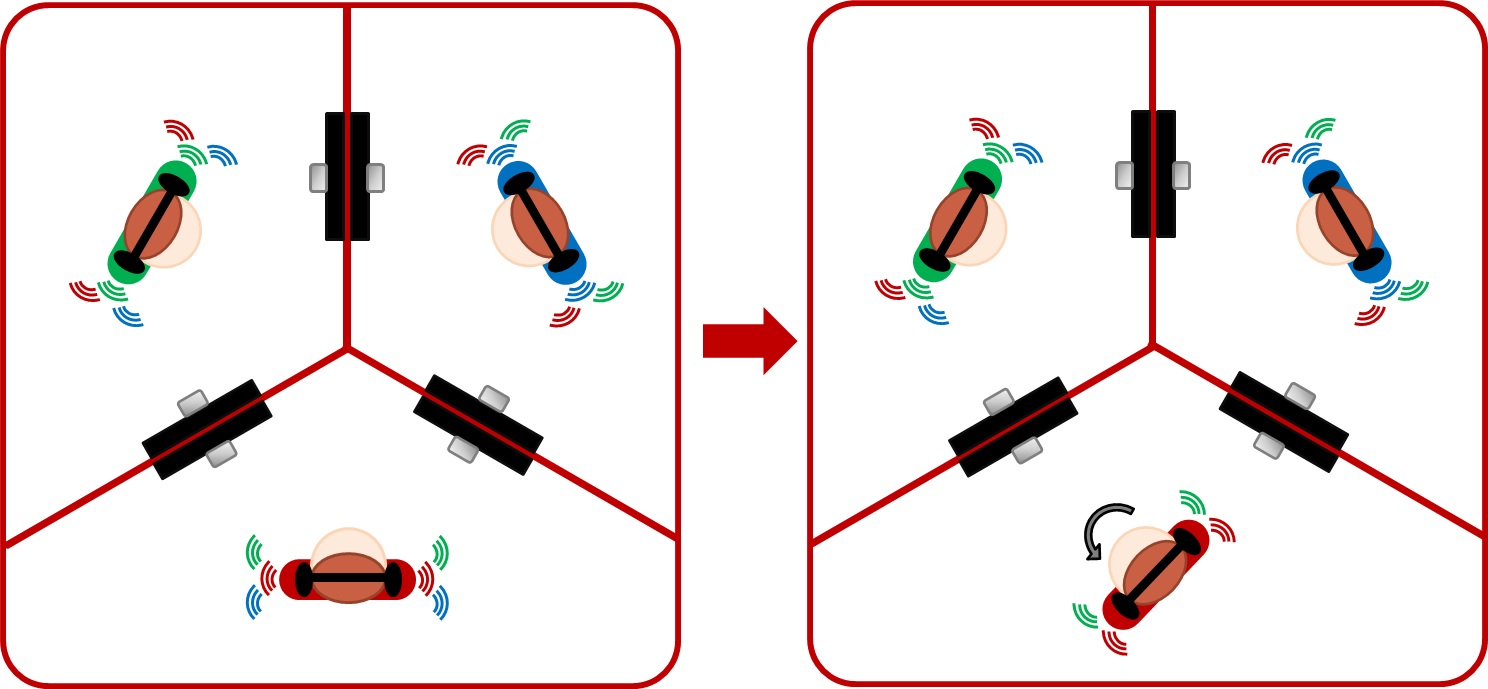

Dynamic Volume

Imagine a distributed performance setting where you can hear the other musicians via headphones, and see each of them on a separate monitor. As you approach the monitor on which you can see one of the remote musicians, you will hear their instrument and/or vocals gradually get louder. They will also hear your instrument and/or vocals get louder. The converse holds true: as you move away from that monitor, each of you will hear the other get quieter. This feature not only allows you to interact with the remote musicians and affect their sound, but also to create your individual mix based on your position in the room.
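To make the idea concrete, here is a minimal sketch of how a distance reading could be turned into a volume level. It is not taken from the repository: the function name `dynamic_gain`, the linear curve, and the default distances and gain limits are all assumptions chosen for illustration.

```python
def dynamic_gain(distance_m, near_m=0.5, far_m=3.0, min_gain=0.2, max_gain=1.0):
    """Map the distance to a remote musician's monitor onto a linear gain.

    All parameter values are hypothetical; the actual system may use a
    different range or a non-linear curve.
    """
    # Clamp the distance to the range the mapping is defined for.
    d = min(max(distance_m, near_m), far_m)
    # closeness is 1.0 right at the monitor and 0.0 at the far limit.
    closeness = (far_m - d) / (far_m - near_m)
    return min_gain + closeness * (max_gain - min_gain)


# The same gain would be applied on both ends of the connection, so that
# each musician hears the other get louder as the distance shrinks.
gain_when_close = dynamic_gain(0.8)
gain_when_far = dynamic_gain(2.5)
```

In this sketch the gain never drops to zero, so a remote musician remains audible even from the far side of the room. That floor is a design choice of the example, not a documented property of the system.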

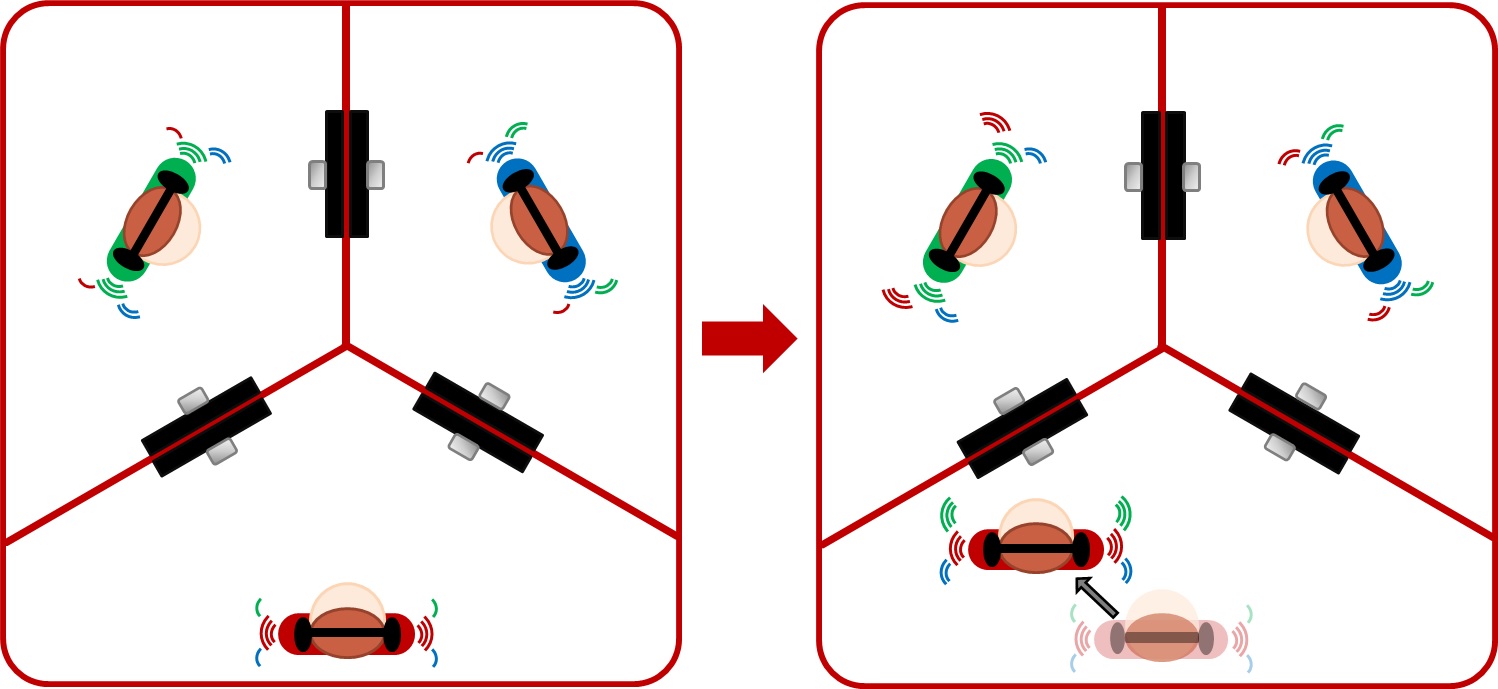

Dynamic Reverb

The Dynamic Reverb feature allows you and the other musicians to affect each other’s reverb levels by moving about your local spaces. As you move away from the monitor on which you can see one of the remote musicians, you will hear the reverb level of their instrument and/or vocals gradually increase. They will also hear your reverb level increase. The converse holds true: as you move towards that monitor, each of you will hear the other’s reverb level decrease. This feature helps you gain a sense of the dimensions of the virtual space you and the remote musicians share.
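One way to picture this behaviour is as a dry/wet balance that shifts with distance. The sketch below is only an illustration under assumed values: `reverb_mix`, the distance range, and the `max_wet` ceiling are hypothetical and do not come from the repository.

```python
def reverb_mix(distance_m, near_m=0.5, far_m=3.0, max_wet=0.6):
    """Return (dry, wet) levels for a remote musician's signal.

    The further you stand from their monitor, the larger the wet (reverberated)
    share becomes. The range and ceiling are assumed values.
    """
    d = min(max(distance_m, near_m), far_m)
    # farness is 0.0 right at the monitor and 1.0 at the far limit.
    farness = (d - near_m) / (far_m - near_m)
    wet = farness * max_wet
    dry = 1.0 - wet
    return dry, wet


dry_near, wet_near = reverb_mix(0.5)  # standing at the monitor
dry_far, wet_far = reverb_mix(3.0)    # standing at the far limit
```

Keeping `dry + wet` equal to 1.0 changes how distant the musician sounds without changing their overall level, which leaves loudness to the Dynamic Volume feature.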

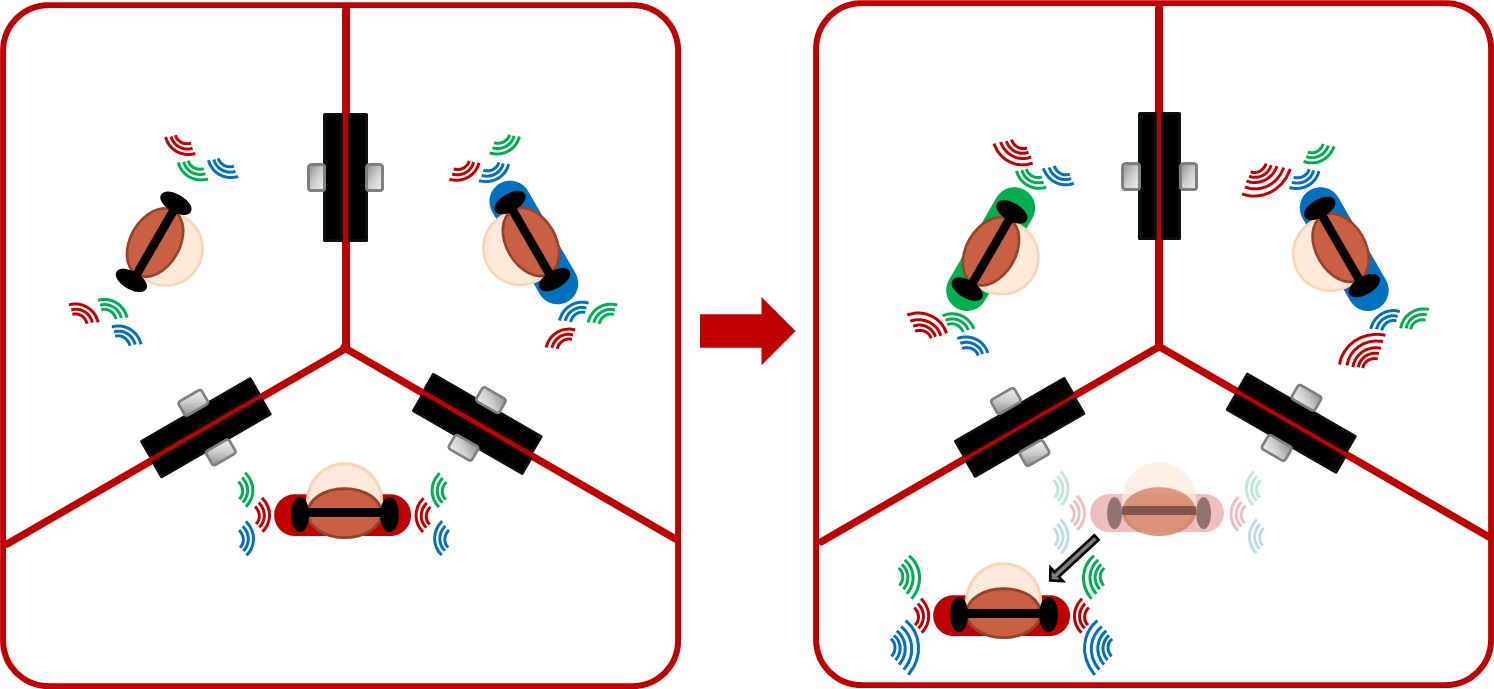

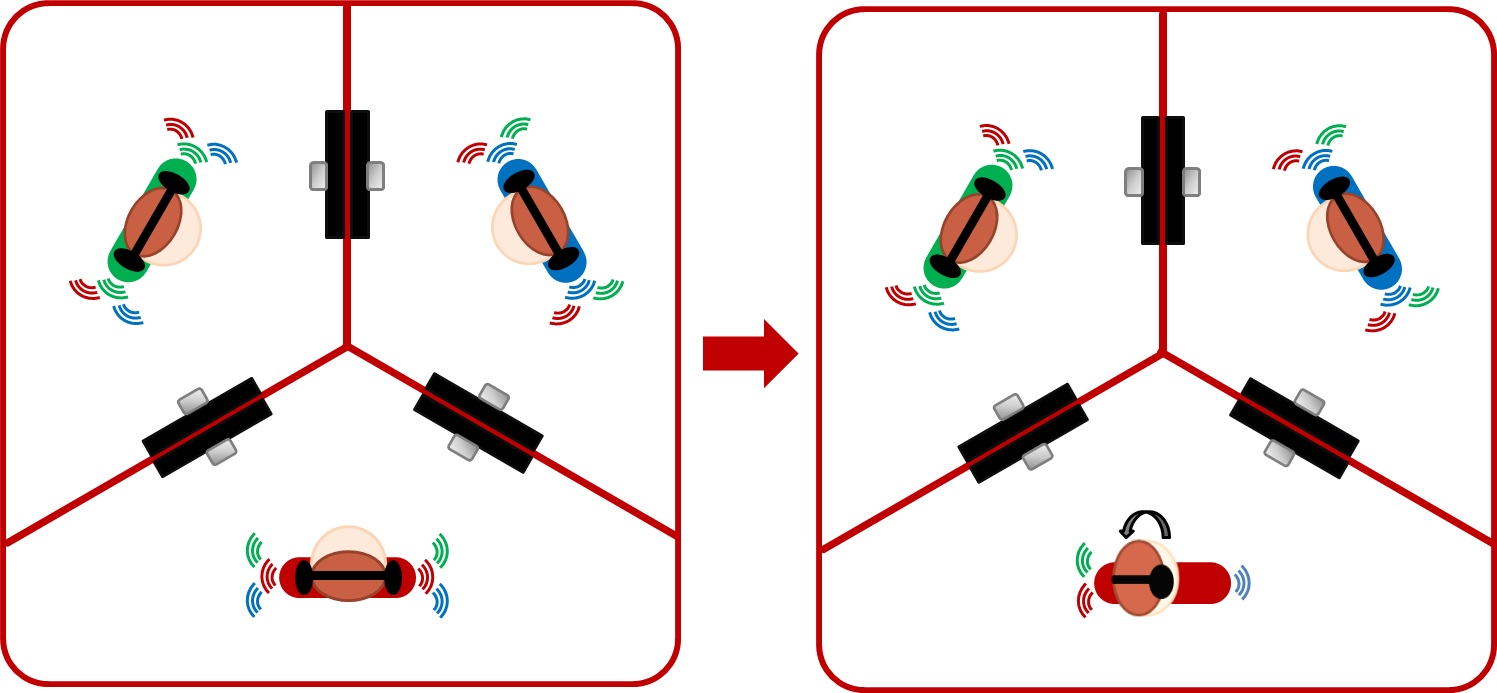

Musician Spatialization

The Musician Spatialization feature allows you to experience the remote musicians’ instruments as spatialized sound sources within your own local space. For instance, when facing straight ahead, you will hear the musician whose monitor is on your left mostly through your left headphone, and the musician whose monitor is on your right mostly through your right headphone. This spatialization effect follows your head orientation, and changes accordingly. This feature helps you gain a sense of the remote musicians’ positions in relation to you within the virtual space you all share.
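The effect described above can be approximated with a standard constant-power pan driven by the angle between your head and each monitor. This is a simplified stand-in, not the spatialization method used in the system: the function `spatialize`, the angle convention (negative angles to the left), and the clamping of sources to the frontal half-plane are assumptions.

```python
import math


def spatialize(monitor_azimuth_deg, head_yaw_deg):
    """Return (left_gain, right_gain) for one remote musician.

    Angles are in degrees, negative to the left (an assumed convention).
    """
    relative = monitor_azimuth_deg - head_yaw_deg
    # Wrap into [-180, 180) so that turning a full circle behaves sensibly.
    relative = (relative + 180.0) % 360.0 - 180.0
    # Simplification: anything beyond 90 degrees to a side is treated as fully to that side.
    relative = max(-90.0, min(90.0, relative))
    pan = relative / 90.0                 # -1.0 = hard left, +1.0 = hard right
    angle = (pan + 1.0) * math.pi / 4.0   # 0 .. pi/2
    # Constant-power law: left**2 + right**2 is always 1.
    return math.cos(angle), math.sin(angle)


# Monitor 45 degrees to the left, head facing straight ahead.
left_gain, right_gain = spatialize(-45.0, 0.0)
# Same monitor after turning the head to face it.
facing_left, facing_right = spatialize(-45.0, -45.0)
```

With the head facing forward, the left-hand musician is louder in the left headphone. Once you turn to face their monitor, the two gains become equal and the musician appears centered.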

Mix Control

The Mix Control feature allows you to change the mix of your instrument and/or vocals with those of the remote musicians by tilting your head in the direction where you want to concentrate the sound of your own instrument. For instance, when you tilt your head to the left, you will be able to hear what your instrument and/or vocals sound like when mixed only with those of the musician whose monitor is to your left, coming through your left headphone. The instrument and/or vocals of the musician whose monitor is to your right will continue to play unaccompanied through your right headphone. The converse holds true if you tilt your head to the right. This feature allows you to hear what your instrument and/or vocals sound like when mixed with those of the remote musicians, one at a time.
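The routing described here can be written down as a small gain table per headphone channel. The sketch below is illustrative only: `mix_control`, the tilt threshold, the sign convention (negative roll means tilting left), and the behaviour with the head upright are assumptions, since the description above only covers the two tilted states.

```python
def mix_control(head_roll_deg, threshold_deg=15.0):
    """Return {channel: {source: gain}} for the current head tilt.

    Negative roll = tilt to the left (assumed convention). The threshold is
    a hypothetical value; the real system may respond gradually instead.
    """
    if head_roll_deg <= -threshold_deg:
        # Tilted left: you plus the left musician in the left headphone,
        # the right musician unaccompanied in the right headphone.
        return {
            "left": {"self": 1.0, "remote_left": 1.0, "remote_right": 0.0},
            "right": {"self": 0.0, "remote_left": 0.0, "remote_right": 1.0},
        }
    if head_roll_deg >= threshold_deg:
        # Tilted right: the mirror image of the case above.
        return {
            "left": {"self": 0.0, "remote_left": 1.0, "remote_right": 0.0},
            "right": {"self": 1.0, "remote_left": 0.0, "remote_right": 1.0},
        }
    # Head upright: assumed default of hearing everyone in both headphones.
    everyone = {"self": 1.0, "remote_left": 1.0, "remote_right": 1.0}
    return {"left": dict(everyone), "right": dict(everyone)}


tilted_left = mix_control(-20.0)
```

Each output channel is then the sum of the three source signals weighted by the gains in its row of the table.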

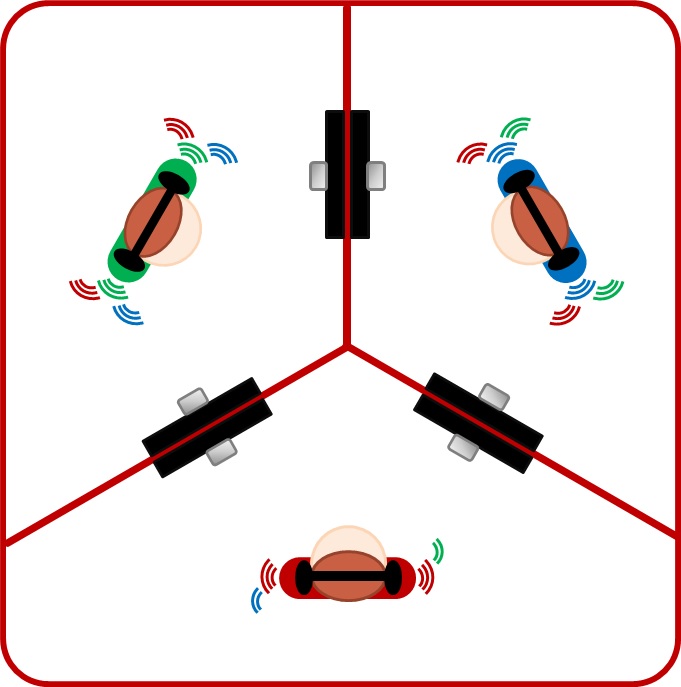

Track Panning

The Track Panning feature allows you to pan between the tracks of the two remote musicians by turning your body towards each of the monitors. When you turn your body towards the monitor to your left, you will be able to hear that musician’s instrument and/or vocals solely through your left headphone. The instrument and/or vocals of the musician whose monitor is to your right will then go quiet in both headphones. The converse holds true when you turn your body to the right. Your instrument and/or vocals will continue to sound the same through both headphones throughout.
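As a rough sketch of this behaviour, body orientation can drive a crossfade between the two remote tracks. Only the two end states come from the description above. The function `track_pan_gains`, the monitor angle, the linear crossfade in between, and the sign convention (negative yaw means turning left) are assumptions made for the example.

```python
def track_pan_gains(body_yaw_deg, monitor_angle_deg=45.0):
    """Return {channel: {source: gain}} for the current body orientation.

    body_yaw_deg is negative when turned left (assumed convention);
    monitor_angle_deg is a hypothetical angle at which a monitor is
    considered fully faced.
    """
    # turn is -1.0 when fully facing the left monitor, +1.0 for the right one.
    turn = max(-1.0, min(1.0, body_yaw_deg / monitor_angle_deg))
    left_track = (1.0 - turn) / 2.0   # 1.0 facing left, 0.0 facing right
    right_track = (1.0 + turn) / 2.0  # 0.0 facing left, 1.0 facing right
    return {
        # Your own signal stays at the same level in both headphones.
        "left": {"self": 1.0, "remote_left": left_track, "remote_right": 0.0},
        "right": {"self": 1.0, "remote_left": 0.0, "remote_right": right_track},
    }


facing_left_monitor = track_pan_gains(-45.0)
```

When fully turned to the left, the left musician plays only in the left headphone and the right musician is silent in both, matching the description. The intermediate positions simply blend between the two end states.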